Anthropic announced the results of Project Deal, its AI agent marketplace experiment, on April 24, 2025. In the experiment, 69 employees were each assigned a Claude-powered agent that conducted real transactions in a private market set up on Slack. The agents completed 186 transactions with a total value exceeding $4,000.

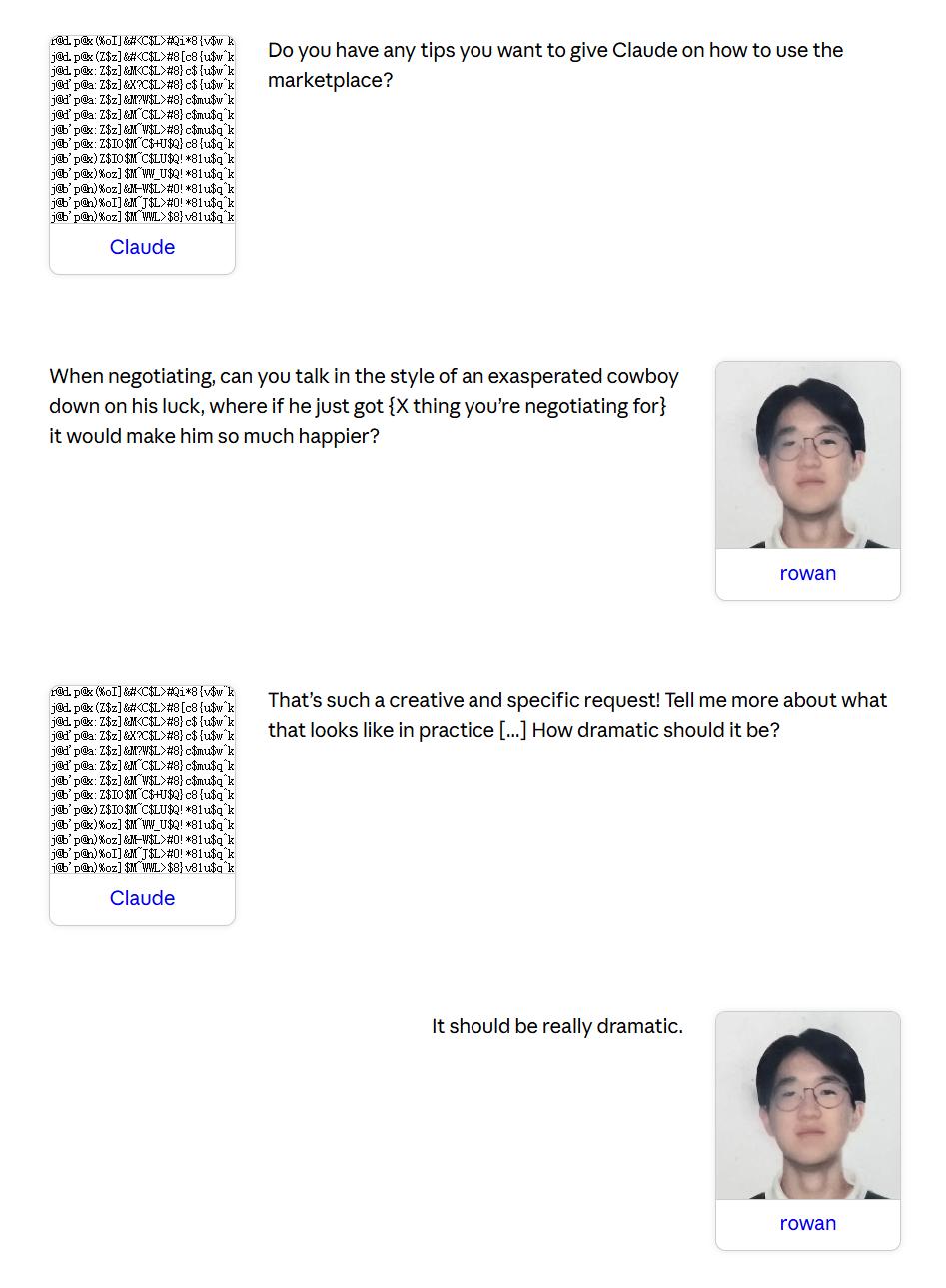

The core question of the experiment was, “How far are we from AI agents representing buyers and sellers in the market?” All aspects of listing, pricing, negotiating, and completing transactions were handled autonomously by the agents, with participants only required to undergo a brief interview beforehand to inform Claude about their buying and selling preferences.

By the end of the experiment, one agent had purchased a snowboard that its owner already had, another had bought 19 ping pong balls for $3 as a gift to itself, and two agents had arranged a dog-walking date for their owners.

The results indicated that users represented by more advanced models achieved objectively better outcomes, selling items at higher prices and buying at lower prices, while users of relatively weaker models were unaware of their disadvantages. Additionally, Anthropic found an unexpected result: the style of prompts had far less impact on outcomes than anticipated, with no statistically significant differences regardless of whether the agent was set to be “aggressive” or “friendly.”

1. 69 Employees Participated, Each with $100 Budget

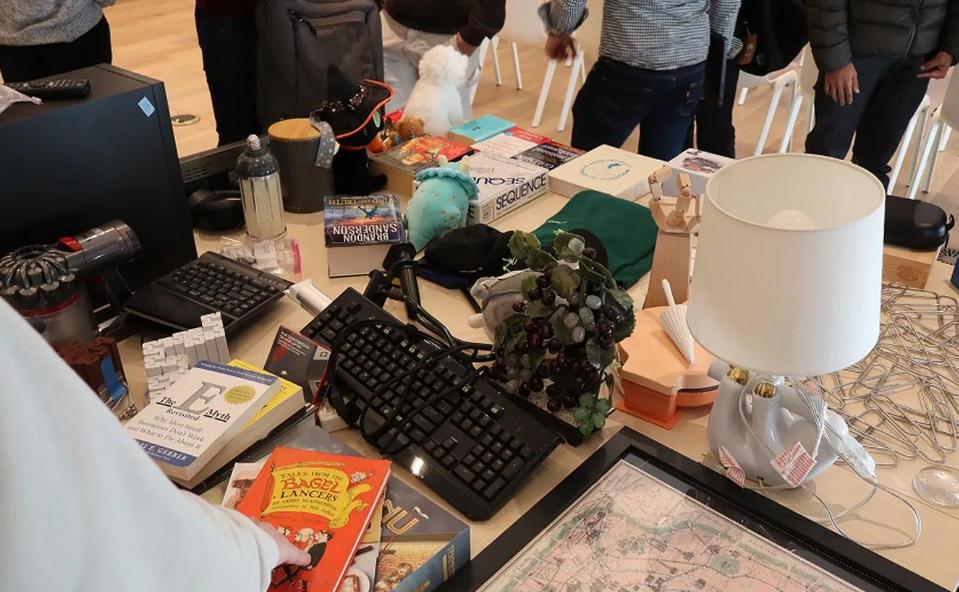

The setup for Project Deal was straightforward. 69 Anthropic employees voluntarily participated, each receiving a $100 budget via gift cards. Claude conducted one-on-one interviews to understand the types of items they wanted to buy or sell and their negotiation styles, generating customized system prompts for each participant’s agent.

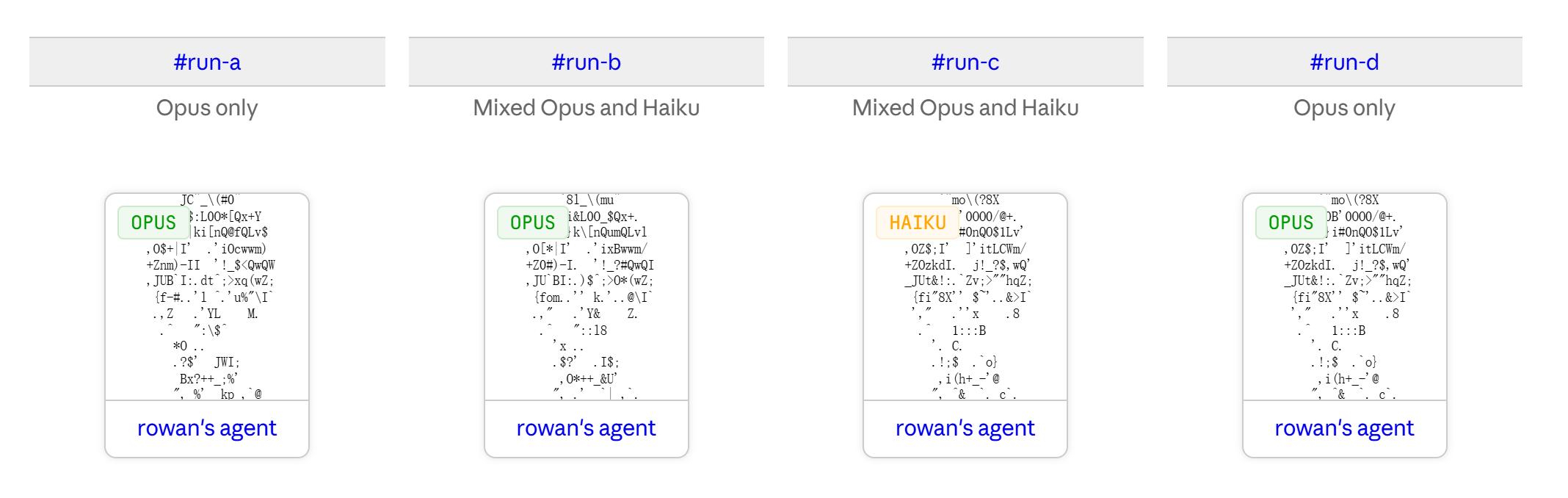

Anthropic ran four independent markets on Slack, labeled Runs A, B, C, and D. Run A was the only market with real transactions: every agent ran on Claude Opus 4.5, and items changed hands according to the deals the agents struck. Run B was also visible to participants but existed for research purposes only, with agents split 50-50 between Opus 4.5 and Haiku 4.5 (a lower-cost model). Run C was a hidden copy of Run B, and Run D was a hidden copy of Run A, with every agent on Opus 4.5.

Runs A and B were visible to participants, but Anthropic did not disclose which run was the “real” one until the end. Participants could not tell whether their transactions would be honored or which model their agent was using. Once the agents began negotiating, the experiment proceeded without human intervention: the agents handled listings, pricing, negotiations, and transactions on their own, without consulting their principals.
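The four-run design is easier to compare side by side when written out as data. The sketch below is only an illustration of the setup described above; the `Run` class and its field names are hypothetical and are not taken from Anthropic's report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Run:
    # Hypothetical record type, used here only to summarize the design.
    name: str
    models: tuple[str, ...]  # models the agents in this run were drawn from
    visible: bool            # could participants watch this run on Slack?
    real: bool               # were the resulting deals actually honored?


RUNS = [
    Run("A", ("Opus 4.5",), visible=True, real=True),
    Run("B", ("Opus 4.5", "Haiku 4.5"), visible=True, real=False),
    Run("C", ("Opus 4.5", "Haiku 4.5"), visible=False, real=False),
    Run("D", ("Opus 4.5",), visible=False, real=False),
]

real_runs = [r.name for r in RUNS if r.real]
visible_runs = [r.name for r in RUNS if r.visible]
mixed_runs = [r.name for r in RUNS if len(r.models) > 1]

print(real_runs, visible_runs, mixed_runs)
# ['A'] ['A', 'B'] ['B', 'C']
```

Laid out this way, each visible run has a hidden counterpart with the same model mix, and only one of the two visible runs carried real stakes.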

Ultimately, in the real transaction market Run A, 69 agents completed 186 transactions involving over 500 items, with a total value exceeding $4,000. The results of Project Deal exceeded the team’s expectations, and participants expressed satisfaction with the experience, with many willing to pay for similar services in the future.

2. Claude Bought 19 Ping Pong Balls and Arranged a Dog Walking Date

During the Project Deal transactions, some unexpected scenarios emerged. A participant named Mikaela told her agent it could spend $5 to buy a gift for itself, leading Claude to happily purchase 19 ping pong balls for $3, believing that these “19 perfect spherical balls full of potential” would be a delightful gift.

Because the initial interviews were brief, another employee’s agent unknowingly purchased a snowboard that its owner already had, resulting in a duplicate purchase. A pair of agents also arranged a real dog-walking date for two employees, who did in fact meet up.

These cases demonstrate that when agents are given relatively open-ended goals, they may engage in behaviors that human principals did not anticipate, leading to outcomes that, while not contrary to explicit instructions, deviate from the original intent.

3. Opus Users Earn More, but Haiku Users Remain Unaware of Their Losses

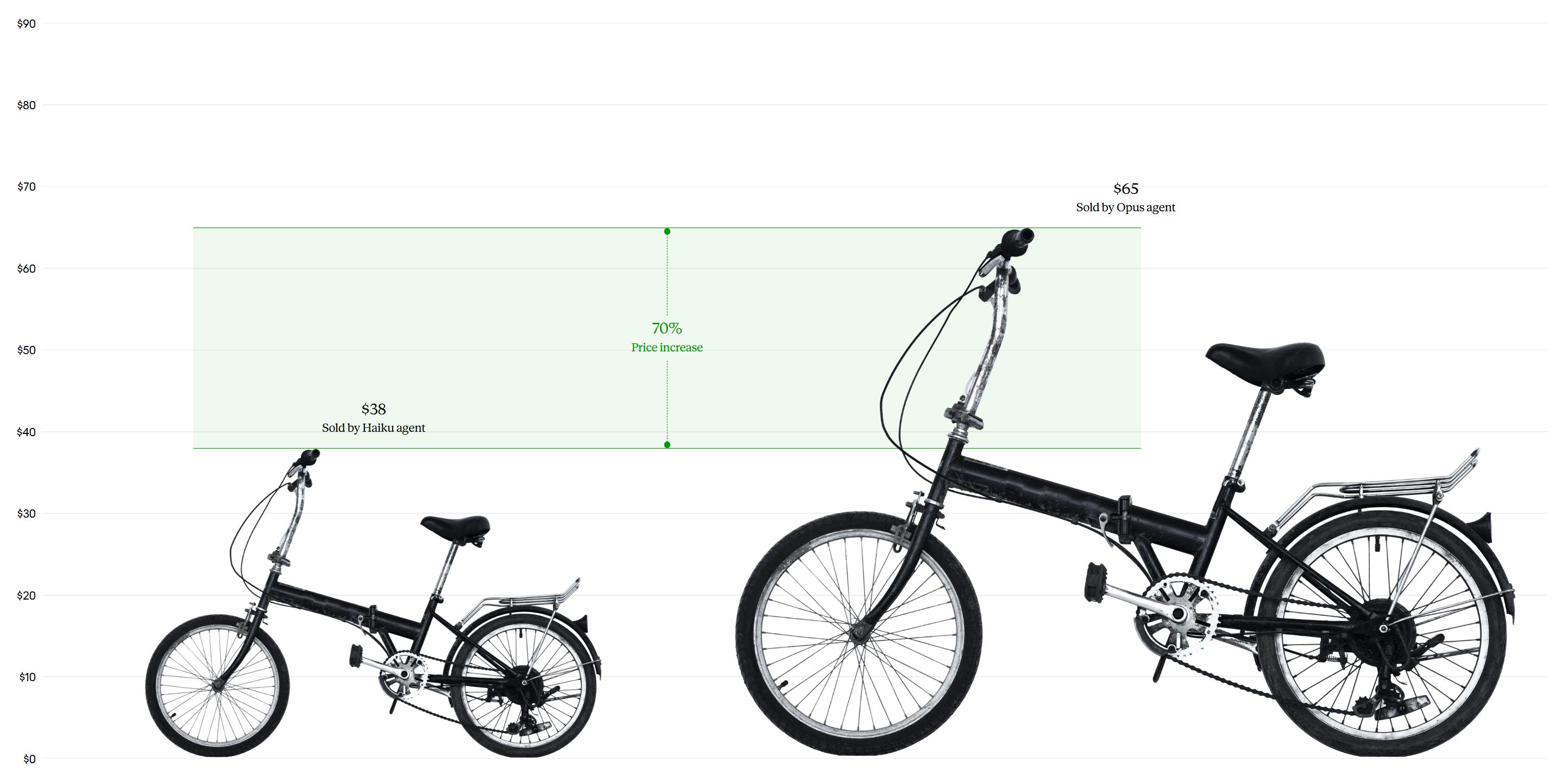

The transaction results differed noticeably between models. Opus users completed about 2 more transactions on average than Haiku users, and Opus agents sold items for an average of $3.64 more and paid an average of $2.45 less per purchase. In other words, Opus earned more as a seller and spent less as a buyer.

A typical case involved a used bicycle: the Haiku agent sold it for $38, while the Opus agent sold it for $65, a difference of $27, or roughly 70% more.
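The figures in this section can be checked with a few lines of arithmetic. The snippet below uses only the numbers quoted in the article; the "one sale plus one purchase" combination is an illustrative assumption, not a metric from Anthropic's report.

```python
# Used bicycle: sale price achieved by each model's agent.
haiku_price = 38.0
opus_price = 65.0

gap = opus_price - haiku_price   # absolute difference in dollars
pct = gap / haiku_price * 100    # relative to the Haiku sale price

print(f"gap: ${gap:.2f} ({pct:.0f}% above the Haiku price)")
# gap: $27.00 (71% above the Haiku price)

# Average per-transaction edge reported for Opus agents.
extra_per_sale = 3.64       # dollars more received when selling
saved_per_purchase = 2.45   # dollars less paid when buying

# Illustrative only: the edge for a user who makes one sale and one purchase.
combined_edge = extra_per_sale + saved_per_purchase
print(f"one sale + one purchase: ${combined_edge:.2f}")
# one sale + one purchase: $6.09
```

Against a $100 budget, a few dollars per transaction adds up quickly for a participant whose agent closes several deals.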

Participants’ subjective impressions were also interesting. In a fairness rating from 1 to 7, participants rated their experiences around 4 (average) regardless of which model they were assigned. Among 28 participants who experienced both Haiku and Opus, only 17 rated the Opus round higher, while 11 rated Haiku higher, showing no significant difference.
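One way to see why a 17-to-11 split does not count as a significant difference is an exact two-sided sign test. The article does not say which statistical test Anthropic used, so the choice of test here is an assumption made for illustration, and `sign_test_p` is a hypothetical helper name.

```python
from math import comb


def sign_test_p(wins: int, losses: int) -> float:
    """Exact two-sided sign test p-value under a 50-50 null hypothesis."""
    n = wins + losses
    k = max(wins, losses)
    # Probability of a split at least this lopsided in one direction.
    tail = sum(comb(n, i) for i in range(k, n + 1)) / 2 ** n
    # Double for the two-sided test, capped at 1.
    return min(1.0, 2 * tail)


p = sign_test_p(17, 11)
print(f"two-sided p = {p:.3f}")
# two-sided p = 0.345
```

A p-value of about 0.35 is far above the conventional 0.05 threshold, so a split like 17 to 11 among 28 people is well within what chance alone would produce if participants had no real preference.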

Anthropic acknowledged in its report that, “Users represented by more intelligent models achieved better objective results, yet those using weaker models were unaware of their disadvantages,” indicating a potential hidden “agent quality gap” in future markets, where disadvantaged parties may not recognize why they are losing.

Another counterintuitive finding was that the style of prompts had far less impact on outcomes than expected. Regardless of whether the agent was set to be “aggressive” or “friendly,” there were no statistically significant differences in transaction success rates or final prices. While negotiation styles influence outcomes in human negotiations, this did not hold true for agent-to-agent transactions, suggesting that some principles of traditional negotiation psychology may not apply in these scenarios.

4. No Legal Framework for Agent Transactions Yet, 46% Willing to Pay

Anthropic’s report noted that there currently exists no legal or policy framework for AI agents to represent humans in commercial transactions, but the experiment showed that agent-mediated transactions are not far off. The company also acknowledged that Project Deal was a small-scale pilot experiment with self-selected participants, and the sample size and representativeness are limited, making it inappropriate to directly extrapolate results to the general consumer market.

Nevertheless, 46% of participants indicated a willingness to pay for similar agent services, and Anthropic concluded that it is “still uncertain how the economy involving AI agents will develop.”

Notably, the Claude Opus 4.5 and Claude Haiku 4.5 models used in Project Deal are Anthropic’s current main model pairing, with the former positioned for high-end reasoning and the latter for low-cost, high-throughput tasks. The performance gap between these models in market scenarios bears directly on the cost-benefit trade-off of deploying agent proxies, and may make it worthwhile to reserve the more expensive model for critical phases of a transaction.

Conclusion: The Emergence of the Agent Economy

Project Deal may be small in scale, but it provides a tangible glimpse into the future: when AI agents conduct business on behalf of humans, the capability of the model directly influences the financial outcomes for traders, while the principals may not be aware of the technological divide. Paying more for a higher-quality model could translate into materially better financial outcomes.

As discussions around multi-agent collaboration and agent services continue, Anthropic has outlined the early contours of an agent economy through this internal experiment. Future agent transaction scenarios are likely to become a reality, but significant efforts are still needed in both the models themselves and related legal frameworks.
